BACK AFTER EXTENDED ROAD TRIP

I have been away from the shop for two weeks while my wife and I visited relatives and friends in Myrtle Beach, South Carolina and Wilmington, North Carolina. Along the way we spent a few days sightseeing in Savannah, Georgia. A quick jaunt to Raleigh, North Carolina to visit a bar famous for elaborate Bloody Mary drinks rounded out the journey.

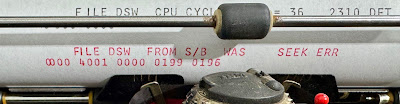

UNUSUAL DIAGNOSTIC CODE RAISED QUESTION ON TIMING

The new version of the typewriter diagnostic combines more than one movement command for the typewriter into a single output instruction (XIO Write), something that isn't discussed in any of the 1130 system manuals and that I haven't found in any other program. This combined movement might produce a momentary rising edge on the -Twr CB Response signal, after which the signal drops again until all movements are complete. It might also cause a falling edge on the +Twr CrLfT Interlock signal in the midst of an operation. If either happens, the edge would trigger a premature completion of the operation, allowing a new XIO Write to be issued while the mechanisms in the typewriter are still in motion.
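One way to check a captured trace for this hazard is to count the completion-type edges seen during a single operation. The following is only a minimal sketch, assuming the scope trace has been reduced to a list of 0/1 logic levels; the function names and sample data are hypothetical and do not come from the diagnostic or the 1130 manuals.

```python
def rising_edges(samples):
    """Return the indexes where the level goes from 0 to 1."""
    return [i for i in range(1, len(samples))
            if samples[i - 1] == 0 and samples[i] == 1]


def falling_edges(samples):
    """Return the indexes where the level goes from 1 to 0."""
    return [i for i in range(1, len(samples))
            if samples[i - 1] == 1 and samples[i] == 0]


def premature_completion(cb_response, crlft_interlock):
    """True if either signal shows more than one completion edge.

    cb_response:     -Twr CB Response levels (1 = high/idle, 0 = busy)
    crlft_interlock: +Twr CrLfT Interlock levels (1 = busy, 0 = idle)
    Both lists are assumed to cover exactly one XIO Write operation.
    """
    return (len(rising_edges(cb_response)) > 1
            or len(falling_edges(crlft_interlock)) > 1)


# Hypothetical traces, not real captures.
clean_cb = [1, 0, 0, 0, 0, 1]        # one rising edge at the end
glitchy_cb = [1, 0, 0, 1, 0, 0, 1]   # extra rising edge mid-operation
interlock = [0, 1, 1, 1, 1, 0, 0]    # one falling edge at the end
```

A clean operation shows exactly one rising edge on -Twr CB Response and at most one falling edge on +Twr CrLfT Interlock; anything more suggests the controller could see the operation as finished too early.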

FEEDBACK SIGNALS ON THE TYPEWRITER

The two feedback signals are implemented with strings of microswitches. Some movements, such as spacing or backspacing, activate a single switch. Carrier return and tab movements are not only long operations, but vary in duration based on how many columns are traversed. Therefore, each of those two types has a pair of microswitches that overlap to cover the entire duration of the movement. If the pair of microswitches is not properly adjusted, they might leave a brief interval in which the movement appears to have completed, causing problems when a program then issues a new XIO Write prematurely.
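The overlap requirement can be expressed as a simple interval check. This is a rough sketch, assuming each microswitch is described by the time it activates and the time it releases; the function name and the millisecond values are made up for illustration and are not measurements from this machine.

```python
def coverage_gaps(intervals):
    """Return (start, end) gaps where no switch in the group is active.

    intervals: list of (on_time, off_time) pairs, one per microswitch.
    Only gaps between the first activation and the last release count.
    """
    if not intervals:
        return []
    ordered = sorted(intervals)
    gaps = []
    covered_until = ordered[0][1]
    for on_time, off_time in ordered[1:]:
        if on_time > covered_until:
            # Neither switch is active here: the movement looks complete.
            gaps.append((covered_until, on_time))
        covered_until = max(covered_until, off_time)
    return gaps


# Hypothetical timings in milliseconds.
well_adjusted = [(0, 60), (50, 400)]   # second switch picks up before the first drops
maladjusted = [(0, 60), (65, 400)]     # 5 ms window with no switch active
```

With the maladjusted values the check reports a gap from 60 to 65, which is the kind of brief interval that would let a program issue the next XIO Write too soon.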

The -Twr CB Response signal is formed with three microswitches in series. One covers the Space/Backspace/Tab operational clutch; the other two cover typing a character and shifting between the upper and lower case hemispheres of the typeball. When any switch activates, the signal drops to 0 and then rises back to +48V when the switch closes again.

The +Twr CrLfT Interlock signal is formed by four microswitches in parallel. It rises to +12V when any switch is activated and drops back to 0 when none of them is turned on. One switch covers the 360 degree rotation of the CR/Index operational clutch, during the time that this either latches up a carrier return or moves the platen in a line feed. Another switch covers the latching of the tab movement during the 180 degree rotation of the Space/Backspace/Tab operational clutch. A third switch is on the latched carrier return mechanism, so that from the time it begins moving leftward until the latch is released by striking the left margin, the switch is active. Finally, a switch covers the movement part of a tab. Tab movement continues for a variable number of columns, between the start point and the next set tab stop, so it has a microswitch that turns on as the carrier begins to slide rightward and turns off when it comes to a stop.
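The electrical behavior of the two signals can be summarized in a few lines. This is a simplified sketch of the description above, not a circuit simulation; the function names are hypothetical, and each switch is represented only as activated (True) or not (False).

```python
def cb_response_volts(switches_activated):
    """-Twr CB Response: three switches in series.

    The chain is broken if any switch is activated, so the signal
    sits at +48V when idle and drops to 0 during an operation.
    """
    return 0 if any(switches_activated) else 48


def crlft_interlock_volts(switches_activated):
    """+Twr CrLfT Interlock: four switches in parallel.

    Any one activated switch raises the signal to +12V; it is 0
    only when none of them is on.
    """
    return 12 if any(switches_activated) else 0
```

For example, `cb_response_volts([False, False, False])` gives 48 for an idle typewriter, while `crlft_interlock_volts([False, False, True, False])` gives 12 while the latched carrier return switch is active.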

INVOLVEMENT OF SWITCHES IN MOVEMENTS

With the paired commands, there can be more than two switches involved. For example, if a backspace is combined with a carrier return, three switches participate and both signals send status. With a simple operation like typing a character, only the -Twr CB Response will be involved.

Since the duration of a Carrier Return (CR) movement depends on how many columns the carrier has to pass, two microswitches control the +Twr CrLfT Interlock signal during a CR. The first is active during the 360 degree rotation of the CR/Index operational clutch, which is the time needed to latch up the CR mechanism. The second is then active until the latch is released.

The reason that Tab has a pair of microswitches is that we have one mechanism that is busy during the 180 degree rotation of the Space/Backspace/Tab operational clutch. That covers the time needed to latch the pawls out of the way so the carrier can slide rightward. The latch of the pawls sets the second microswitch, so that it is only when the latch is released by banging into the set tab stop that we turn off that switch.

There is a switch on the Space/Backspace/Tab operational clutch, which participates in the -Twr CB Response signal, and another microswitch on the linkage that sets the tab latch, which participates in the +Twr CrLfT Interlock signal. Thus during a Tab, both signals are active but start and stop at different times with some overlap. No other operation on the typewriter affects both signals - they activate one or the other depending on the operation type.
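To keep track of which switches and signals to expect for a given command, the relationships described above can be written as a small lookup. This is my reading of the description restated as a table, with hypothetical switch and operation names; it is a sketch for reasoning about combined commands rather than anything taken from IBM documentation.

```python
CB = "-Twr CB Response"
INTERLOCK = "+Twr CrLfT Interlock"

# Which feedback signal each microswitch feeds (switch names are made up).
SWITCH_SIGNAL = {
    "sbt_clutch": CB,              # Space/Backspace/Tab operational clutch
    "print": CB,                   # typing one character
    "shift": CB,                   # upper/lower case shift
    "cr_index_clutch": INTERLOCK,  # 360 degree CR/Index clutch rotation
    "tab_latch": INTERLOCK,        # tab latching during the 180 degree rotation
    "cr_latched": INTERLOCK,       # carrier moving left until latch releases
    "tab_moving": INTERLOCK,       # carrier sliding right to the tab stop
}

# Which switches each operation activates.
OPERATION_SWITCHES = {
    "type": ["print"],
    "shift": ["shift"],
    "space": ["sbt_clutch"],
    "backspace": ["sbt_clutch"],
    "tab": ["sbt_clutch", "tab_latch", "tab_moving"],
    "carrier_return": ["cr_index_clutch", "cr_latched"],
    "line_feed": ["cr_index_clutch"],
}


def involved(*operations):
    """Return (switches, signals) participating in the given operations."""
    switches = set()
    for op in operations:
        switches.update(OPERATION_SWITCHES[op])
    signals = {SWITCH_SIGNAL[s] for s in switches}
    return switches, signals
```

Calling `involved("backspace", "carrier_return")` yields three switches and both signals, matching the combined example above, while `involved("type")` yields only the -Twr CB Response signal.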

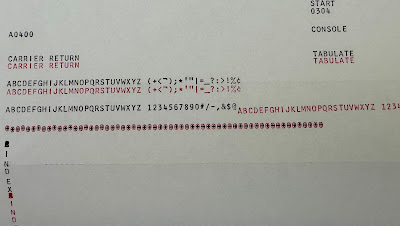

SCOPE OBSERVATIONS

I set up a manual XIO Write instruction to trigger the different movement commands, both individually and in the combinations seen in the diagnostic code. For each I observed the feedback signals on the oscilloscope. I compared those to the expected signal shape for each operation to identify any maladjusted switches.

I found that the individual write commands were working as expected for most movement types, but that the tab and space movements were not activating on the typewriter. The feedback signals were correct for all the working movement types. I didn't initially try the combined movements from the diagnostic as I wanted to get the individual types all working correctly before doing any combinations.

The yellow trace is the XIO Write to the typewriter of a carrier return movement, the blue trace is the busy condition signal and the purple trace is the +Twr CrLfT Interlock signal showing that the return movement is underway. By comparison, an XIO Write for a tab or space showed busy but neither the purple +Twr CrLfT Interlock nor the green -Twr CB Response signals move at all since no motion took place.

INVESTIGATING FAILURE OF TAB AND SPACE COMMANDS

The circuits from the 1130 controller logic to the typewriter solenoids were observed. Each will be at +48V until the relevant XIO Write value pulls a solenoid line to ground. This should cause it to pull down on a trigger and activate the typewriter movement function.

I saw the lines pulled to ground for both of the solenoids, yet the movement didn't trigger. One other thing I noticed was that the line to each of these solenoids stayed grounded longer than it did for the working solenoids such as carrier return. Of course, the feedback signals didn't change since the typewriter mechanism was not triggered to perform the tab or space. The difference in duration may be caused by the arrival of the feedback signal, which might terminate the solenoid action; no feedback, thus no termination.

CHECKING OUT SOLENOID ACTION FOR SPACE AND TAB

I set up to watch the solenoids when I attempted to write a command to tab or space. I wanted to find the cause of the failure to trigger the movements. There are several possible causes, with the resolution depending on exactly why the motion is not triggered.

The movement starts with a trigger that releases the left operational clutch which rotates 180 degrees before latching back to a stop. The left clutch powers the space, tab and backspace movement functions. A right 360 degree clutch powers carrier return and line feed movements.

The release of the clutch to allow it to turn is due to a trigger lever.

The left operational clutch has three trigger levers, one each for tab, space and backspace. These trigger levers can be moved by two different types of mechanisms - pushbuttons and solenoids. On an ordinary Selectric typewriter, a third mechanism based on keylevers is used instead. The pushbutton on the front of the console printer is connected via a cable to trip the trigger.

The trigger is also pulled down to activate the clutch by the operation of a solenoid. When it is energized, the armature pulls down on a screw link that moves the trigger.

Thus, the failure of the tab and the space triggers to activate the left operational clutch could have several causes. The solenoid might be mechanically jammed and thus not move its armature down. The screw link might not move the trigger down far enough to cause the operational clutch to release. The cable from the pushbutton might be holding the trigger up so that the solenoid's pull doesn't result in enough trigger movement to trip the clutch.

We know that the pushbuttons are triggering the tab and the space functions. We know that the backspace command from an XIO Write will trip the clutch and cause a backspace. What is failing is an XIO Write requesting tab or space. I therefore watched to see what the solenoid activation did on this machine. Did the armature move? Did the screw link pull the trigger down? Is the cable from the pushbuttons stopping the trigger from moving down to trip the clutch?

ADJUSTMENTS MADE TO CORRECT THE ANOMALIES

Having found the root cause, I worked on a fix. It was important that both pushbuttons and solenoids worked for the two movements in question - tab and space - so I had some checking to do after each change.

The magnet unit above supports tab, backspace and line feed at the top row, space and carrier return at the bottom row. The armatures are arranged to form a line across the middle, with screw links up to the triggers for the tab, space, backspace, carrier return and line feed in that order left to right.

The screw links were adjusted to allow the armature to start moving before it pulled on the trigger yet move far enough to ensure it did trigger. That worked for the space function but I still had issues with the tab.

The screw link passes through a hole in the armature, then there is a metal cylinder under the armature and surrounding the screw link. Finally a nut at the bottom adjusts the length of the screw link. The metal cylinder has a gap on each side - armature and nut - which allows the armature to get up to speed before it starts pulling down on the screw link.

I adjusted the screw links for the two solenoids that were malfunctioning until they seemed to be reliably triggering. I did find interactions with the pushbuttons, so that I had to reset those adjustments with the front panel in place on the typewriter.